Sarthak Kumar Maharana

Email: sarthakmaharana9811@gmail.com

CV & Google Scholar & GitHub & LinkedIn

I'm a CS PhD candidate at the University of Texas at Dallas, advised by Dr. Yunhui Guo. I'm also part of the Data Efficient Intelligent Learning Lab. I broadly study computer vision with an emphasis on continual learning. My research is grounded in the belief that AI systems must continually adapt to the world rather than remain stagnant. Lately, I'm interested in a couple of new directions:

(1) Designing efficient continual learning systems capable of modeling long sequences. There's a dire need to architect AI systems that scale and behave as sub-quadratic alternatives to the ubiquitous transformers.

(2) Enabling continual, high-fidelity multimodal generation for customization and personalization.

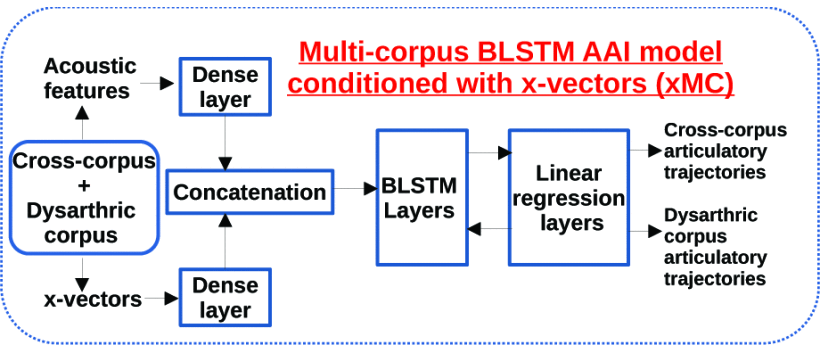

Before starting my PhD, I completed my Master's in Electrical Engineering at the University of Southern California (USC) and a Bachelor's degree in Electrical and Electronics Engineering at IIIT Bhubaneswar (IIIT-Bh), India. During my Master's, I worked with Dr. Yonggang Shi. Previously, I had also worked with Dr. Shri Narayanan. As an undergraduate, I was fortunate to work with Dr. Ren Hongliang (NUS), Dr. Prasanta Kumar Ghosh (IISc), and Dr. Aurobinda Routray (IIT-Kharagpur).

I have published at top-tier ML/computer vision/signal processing venues such as TMLR, ICCV, NeurIPS (3x), AAAI, ECCV, and ICASSP (2x).

If any of my new research directions are (hopefully) of interest to you, I'm happy to chat, discuss, and explore potential collaborations. Feel free to contact me.